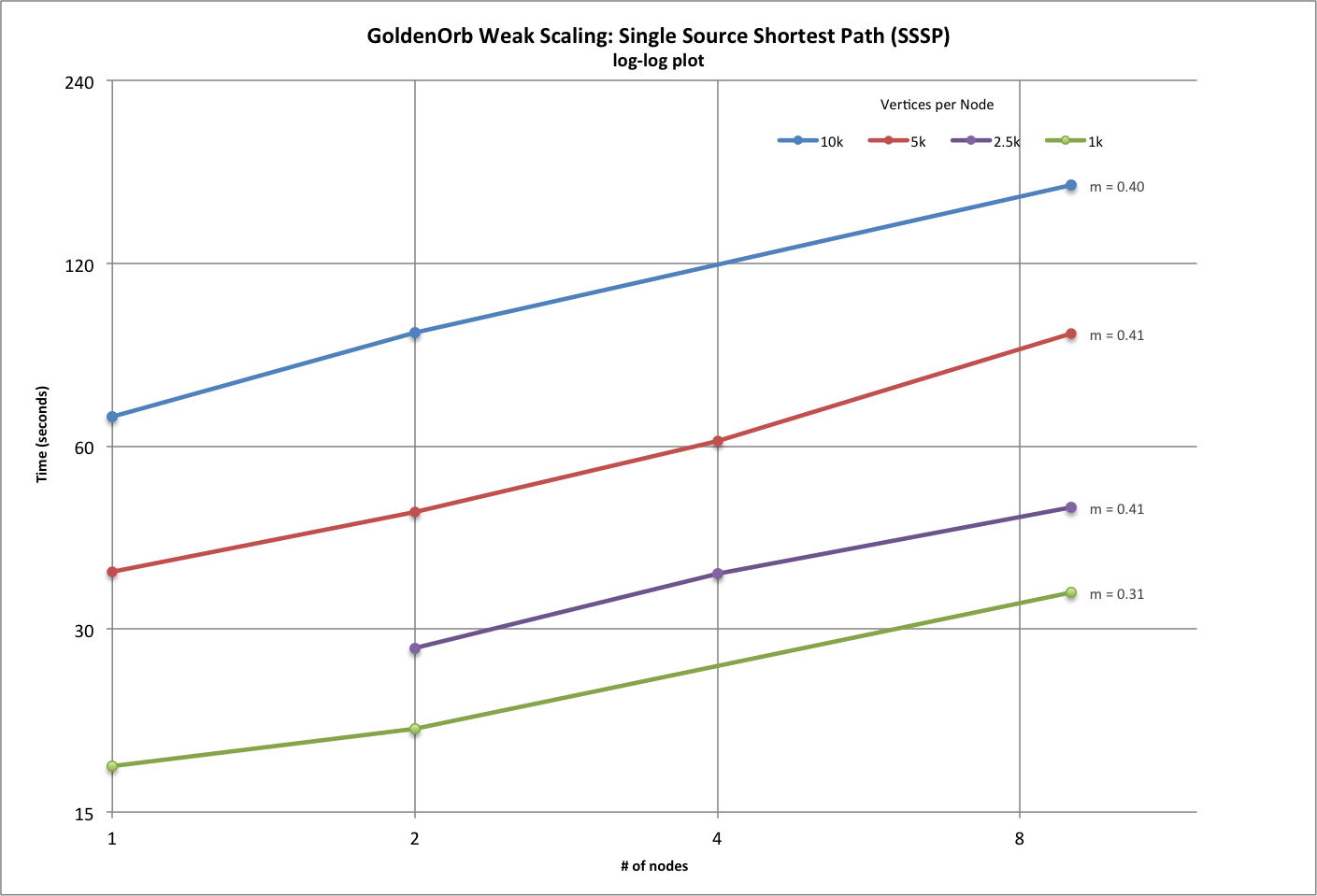

Weak Scaling Results (SSSP)

| # vertices per node | 250 | 500 | 1000 | 2500 | 5000 | 10000 | 12500 | 25000 | 50000 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| power (0.0 optimal) | 0.408245144 | 0.231128758 | 0.307090584 | 0.353851806 | 0.410556488 | 0.395686489 | 0.479227847 | 0.323467824 | 0.385464748 |

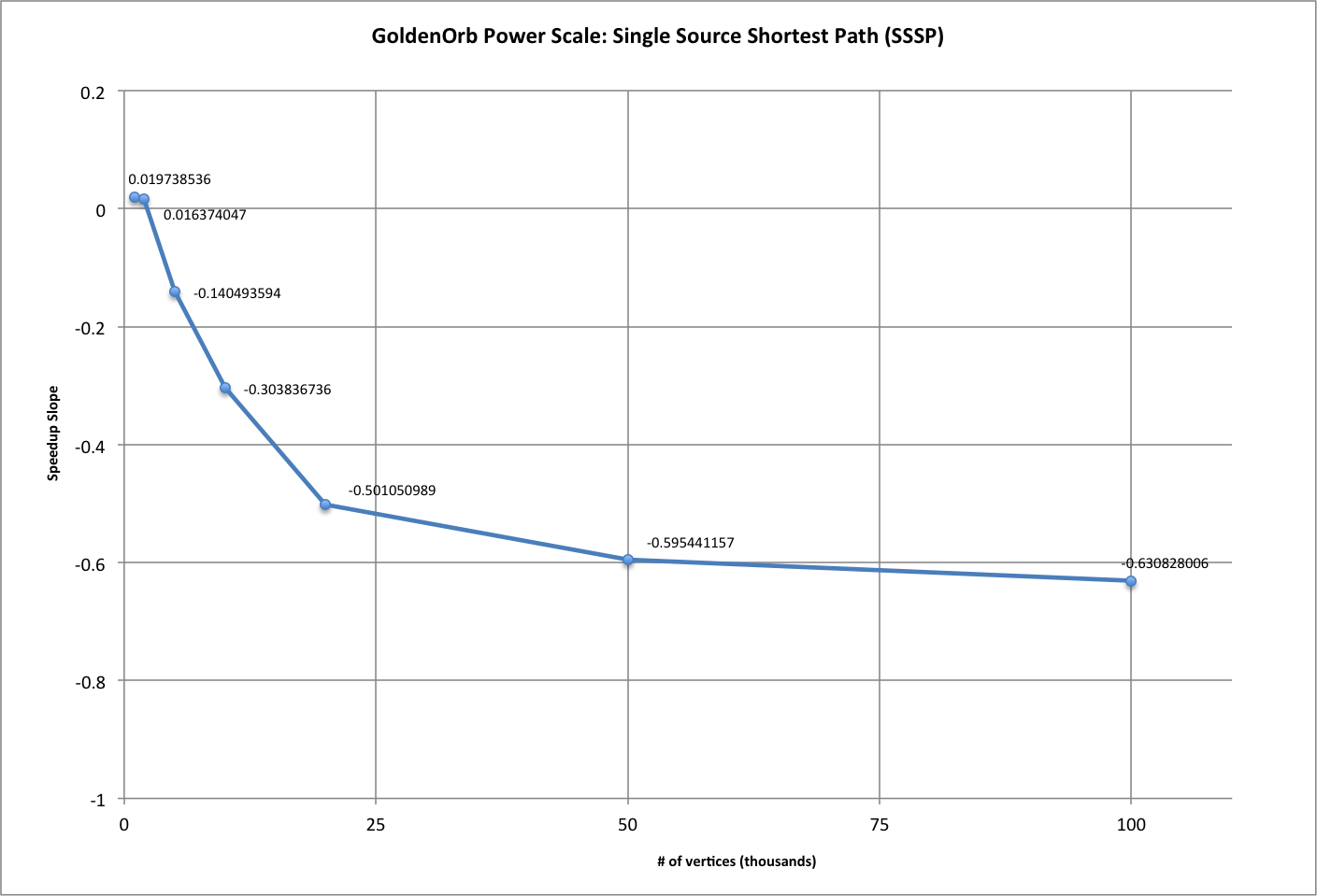

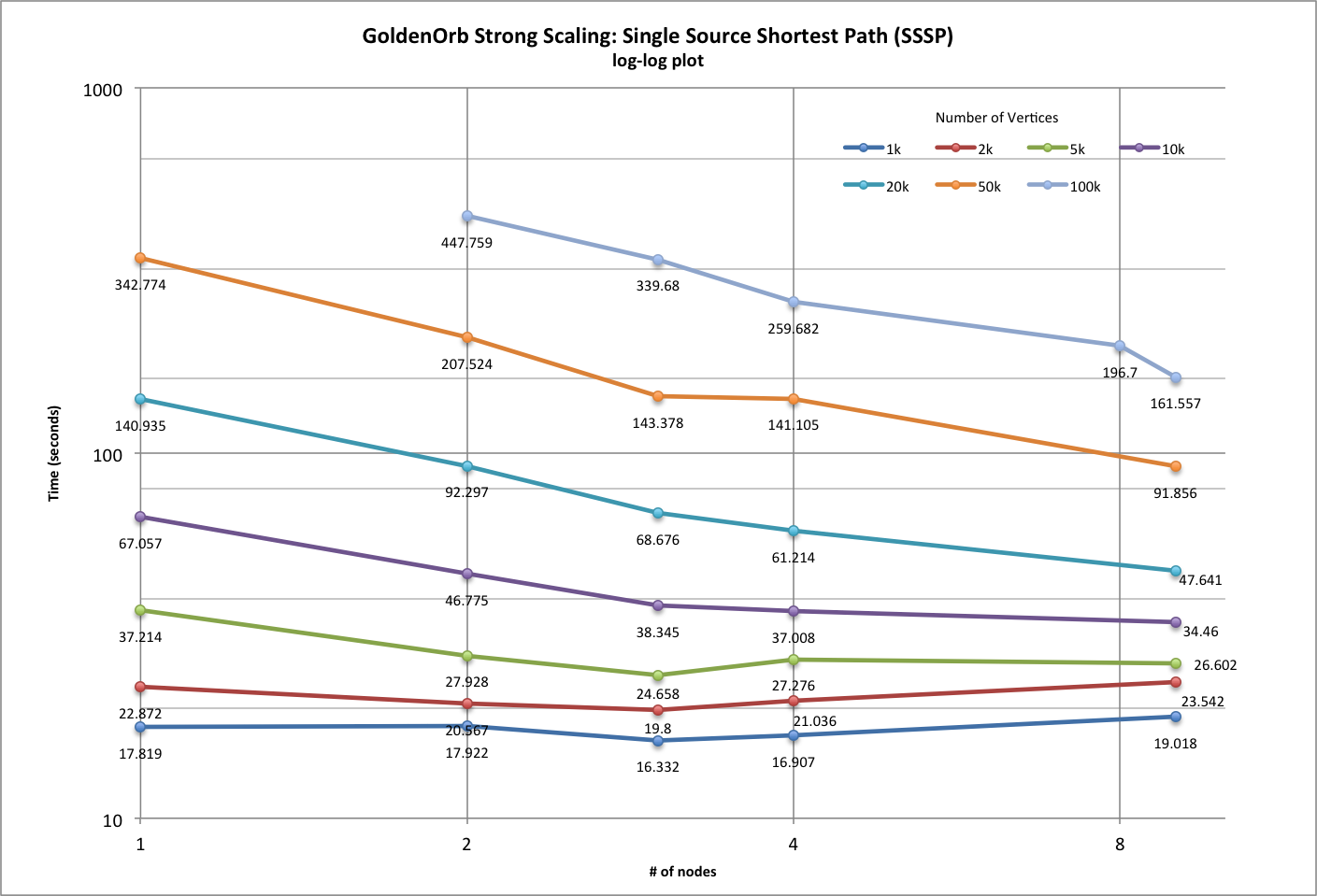

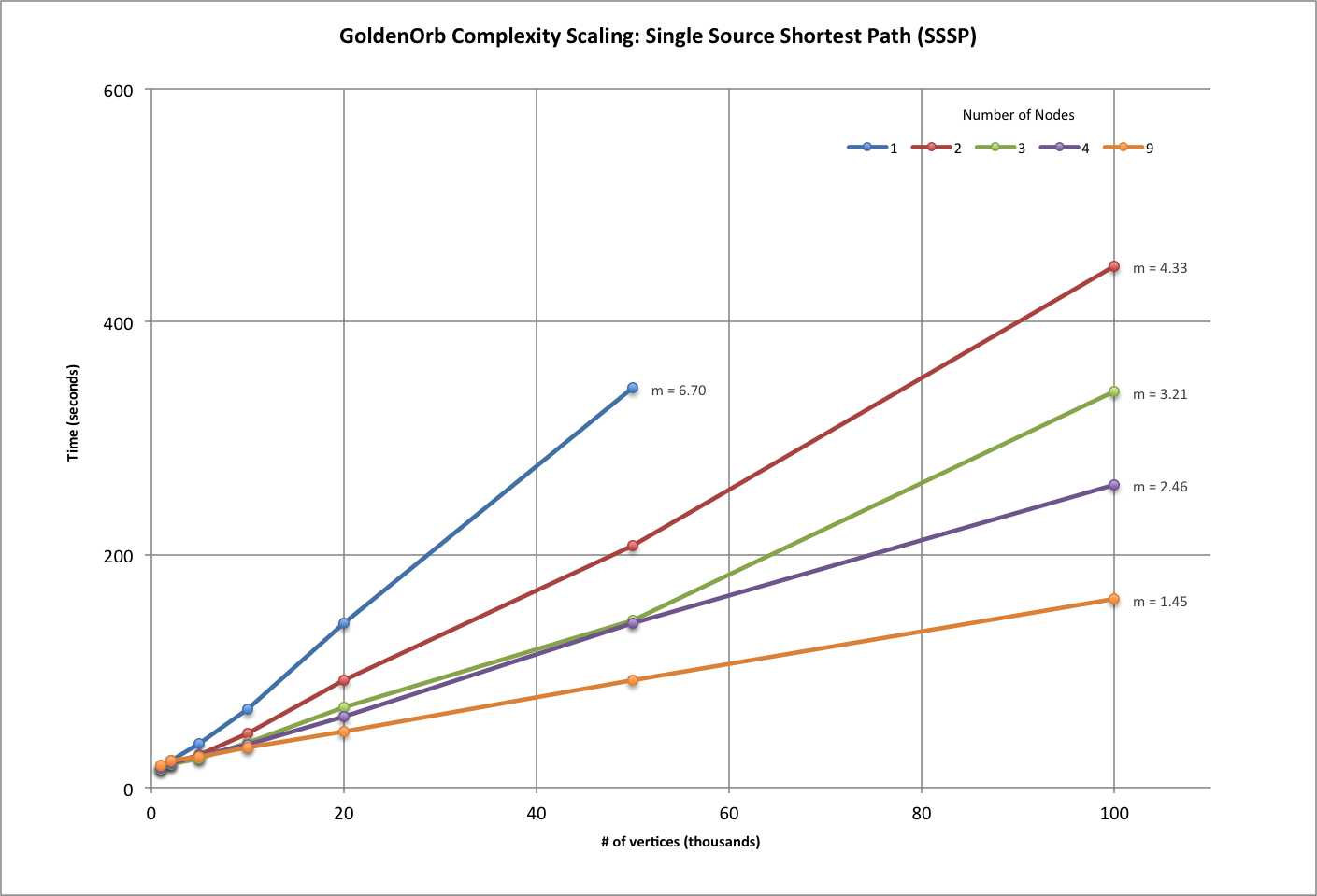

Strong Scaling Results (SSSP)

| # vertices per cluster | 1000 | 2000 | 5000 | 10000 | 20000 | 50000 | 100000 |
| --- | --- | --- | --- | --- | --- | --- | --- |
| power (-1.0 optimal) | 0.019738536 | 0.016374047 | -0.140493594 | -0.303836736 | -0.501050989 | -0.595441157 | -0.630828006 |

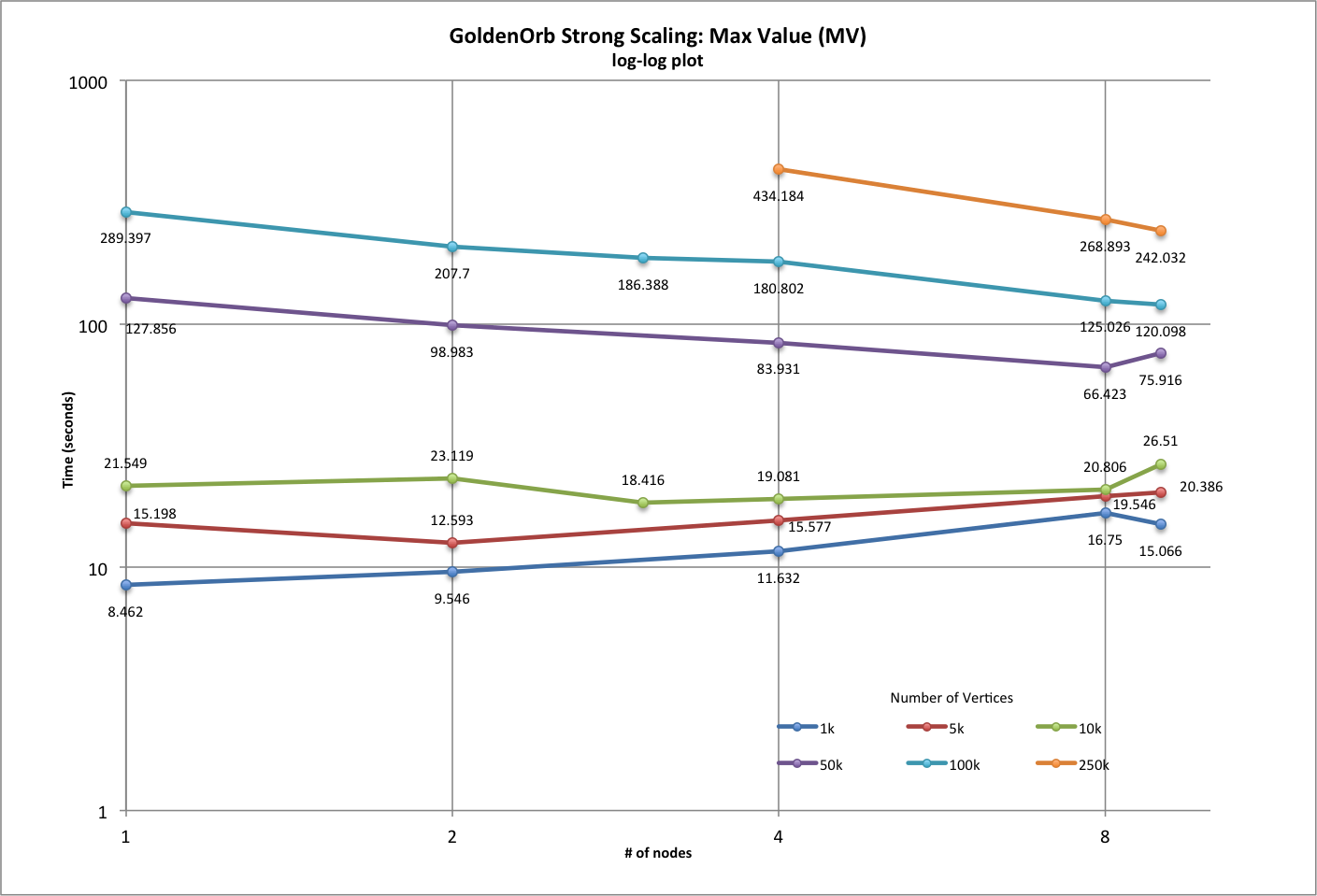

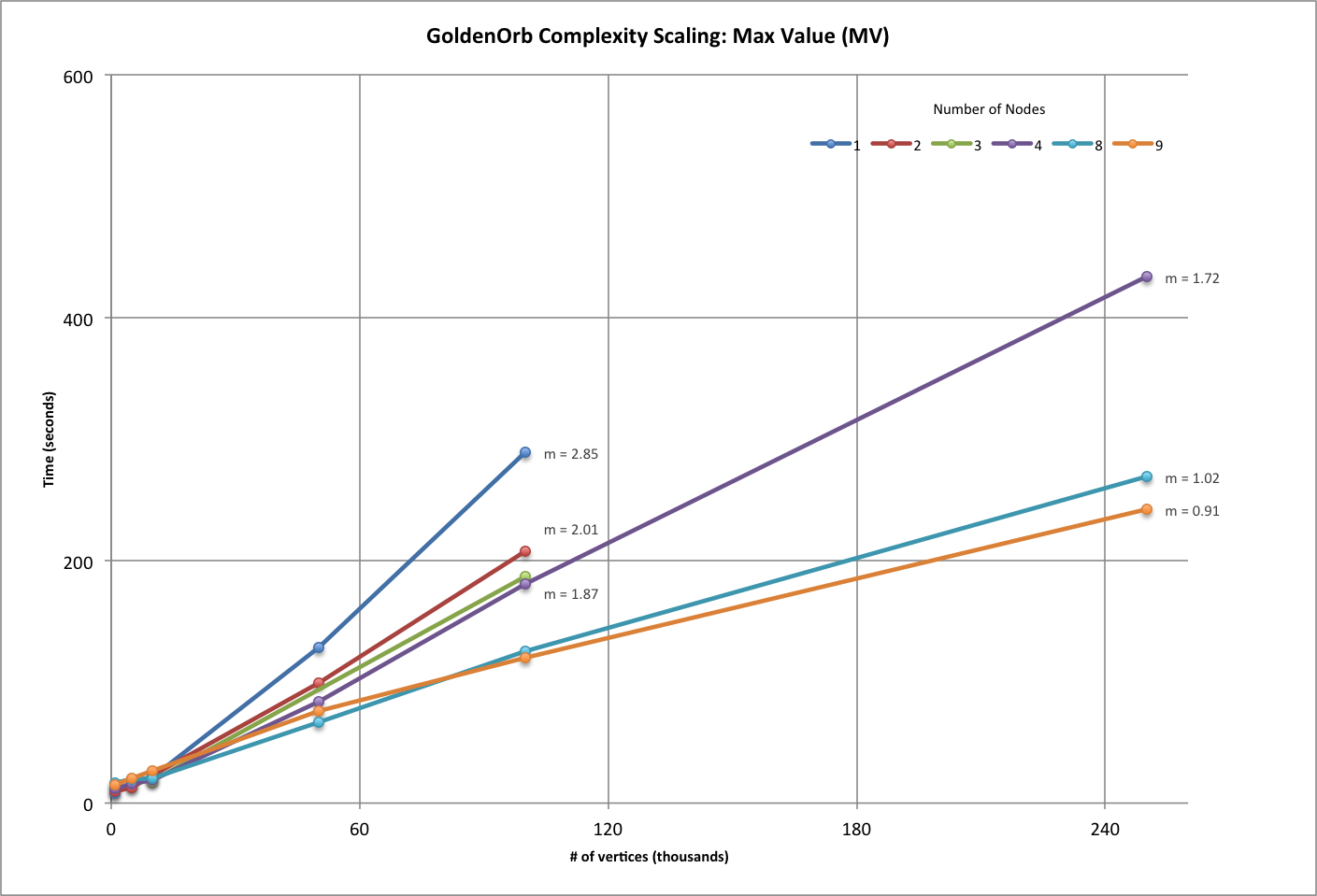

Strong Scaling Results (MV)

| # vertices per cluster | 1000 | 5000 | 10000 | 50000 | 100000 | 250000 |
| --- | --- | --- | --- | --- | --- | --- |
| power (-1.0 optimal) | 0.303088931 | 0.168915436 | 0.038561803 | -0.262642144 | -0.389931207 | -0.710481122 |
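
The tables do not state how the "power" values were computed. One plausible reading, assumed here, is that each value is the exponent `p` in a power-law fit `runtime ≈ c · nodes^p`. Under that reading, the optimum is 0.0 for weak scaling (runtime stays flat as nodes are added) and -1.0 for strong scaling (runtime halves when the node count doubles). The sketch below fits such an exponent as the least-squares slope in log-log space. The function name `fit_power` and the timings are hypothetical and are not taken from the measurements above.

```python
import math


def fit_power(nodes, runtimes):
    """Return the least-squares slope of log(runtime) against log(nodes).

    Assumption: the reported "power" is the exponent p in runtime ~ c * nodes**p.
    """
    xs = [math.log(n) for n in nodes]
    ys = [math.log(t) for t in runtimes]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


# Hypothetical timings for illustration only.
nodes = [1, 2, 4, 8]
ideal_strong = [8.0, 4.0, 2.0, 1.0]  # fixed problem size, runtime halves per doubling
ideal_weak = [3.0, 3.0, 3.0, 3.0]    # fixed work per node, runtime stays constant

print("ideal strong scaling power:", fit_power(nodes, ideal_strong))  # close to -1.0
print("ideal weak scaling power:", fit_power(nodes, ideal_weak))      # close to 0.0
```

Read this way, the strong-scaling rows move toward the -1.0 optimum as the problem size grows, reaching about -0.63 for SSSP at 100000 vertices and about -0.71 for MV at 250000 vertices.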